We describe CURIE, a scientific benchmark for long-Context Understanding, Reasoning, and Information Extraction that evaluates the efficacy of large language models in solving scientific problems and assisting scientists in real-world workflows. The ability to build on the collective knowledge in scientific literature is essential to the advancement of science. This requires not only deep domain expertise and reasoning skills, but also the capacity to apply that knowledge to a particular problem. Commonsense reasoning, language comprehension, coding, mathematics, and scientific question-answering have all been demonstrated to be covered by large language models (LLMs). As LLMs transition from merely surfacing knowledge to reasoning and actively solving problems, their application to scientific endeavors holds immense potential, promising to revolutionize how research is conducted and understood.

To realize this potential, LLMs’ abilities to deal with the complexities of scientific tasks will need to be thoroughly evaluated. The capacity of models to comprehend and reason about long-form, context-rich scientific information, including multimodal content in figures and tables, as well as the reasoning processes models employ to select the appropriate tools to address the issues at hand, will need to be measured. However, the current science LLM benchmarks frequently focus on short-form questions and multiple-choice responses, which primarily test knowledge recall and reasoning ability. To address this gap, we propose several new benchmarks and datasets to measure the ability of LLMs in seeking information and solving problems where additional context is available. In our forthcoming paper, titled “CURIE: Evaluating LLMs on Multitask Scientific Long-Context Understanding and Reasoning,” which will be presented at ICLR 2025, we examine six scientific fields for tasks that test long-context understanding, reasoning, information extraction, and aggregation capabilities. Similar to this, we presented “SPIQA: A Dataset for Multimodal Question Answering on Scientific Papers” at NeurIPS 2024. This dataset evaluated LLMs’ ability to back up their responses to questions with figures and tables from scientific papers. Alongside this dataset, we also created a benchmark test set and evaluated multimodal LLMs on the task. Additionally, at the MATH-AI workshop at NeurIPS 2024, we shared “FEABench: Evaluating Language Models on Multiphysics Reasoning Ability” in which we proposed a task to measure the ability of LLM agents to simulate, reason, and solve physics, mathematics and engineering problems using finite element analysis (FEA) software.

CURIE, a multitask benchmark for scientific reasoning

CURIE is designed to evaluate LLMs on scientific problem solving across six disciplines: materials science, condensed matter physics, quantum computing, geospatial analysis, biodiversity, and proteins. It has ten difficult tasks that require domain knowledge, comprehension of lengthy context information, and reasoning in multiple steps. The tasks in CURIE cover a range of scientific workflows, including information extraction, reasoning, concept tracking, aggregation, algebraic manipulation, multimodal understanding, and cross-domain expertise, all performed within the context of full-length scientific papers. CURIE intends to evaluate the potential of LLMs to assist scientists in their day-to-day workflows by requiring them to be proficient at such realistic tasks. Experts in their fields were integral to the benchmark’s development at every stage. To begin, they assisted in sourcing pertinent research papers from their respective fields and helping to define and identify tasks that accurately represent real-world scientific workflows. After that, they contributed to the creation of in-depth ground truth responses, prioritizing completeness, nuance, and accuracy. Finally, they quantified task difficulty by rating each example based on key features. We then selected evaluation metrics and confirmed their correlation with the experts’ subjective assessments of model responses compared to the ground truth answers. An overview of the benchmark’s specific tasks can be found in the table below. Evaluations based on models and programs Ground-truth annotations in a variety of formats, such as JSONs, latex equations, YAML files, or free-form text, can be found on a wide range of CURIE tasks. Evaluating free-form generation is challenging because answers are often descriptive, and even when a format is specified, as in most of our cases, the response to each field can have differing forms. Materials grid points, for instance, may be specified as “[p, q, r]” or “p q r” at different times.

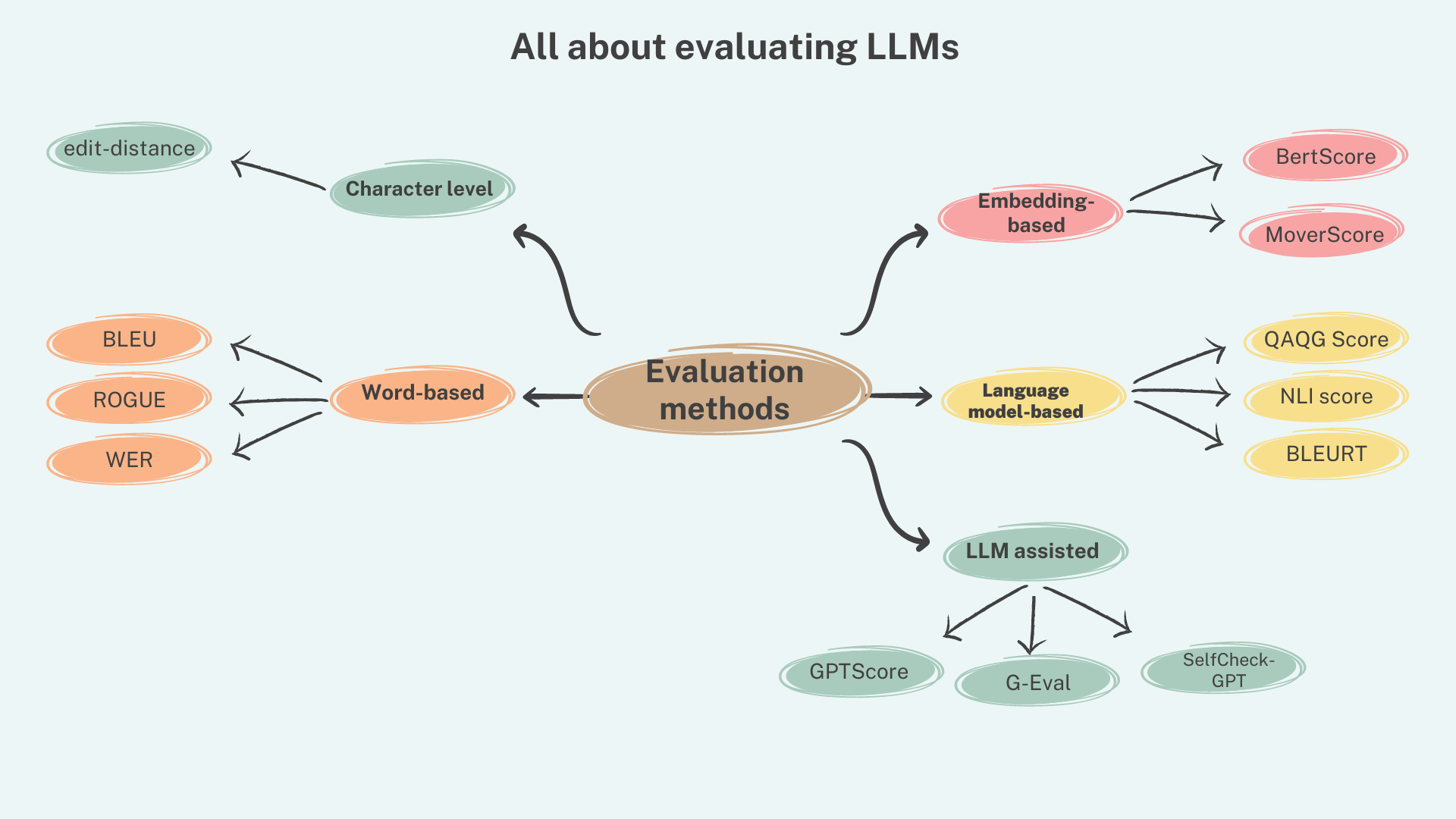

As a result, we propose two model-based evaluation metrics in addition to programmatic metrics like ROUGE-L, intersection-over-inion (used for BIOGR), and identity ratio (used in PDB). (1) The LMScore prompts an LLM to inquire about how closely the predictions match the ground truth on a three-point scale: “good” for predictions with few minor errors, “okay” for predictions with many minor errors, and “bad” for predictions with many major errors. To arrive at a final confidence, we take into account the weighted average of the tokens’ log-likelihood scores. (2) LLMSim: Is used for retrieval tasks where we ask the model to exhaustively extract many details, e.g., descriptors, properties and values of materials from a research document, and provide as output an unordered list of dictionaries or records. We employ a chain-of-thought (CoT) prompt in which we instruct the LLM to examine each ground-truth record and select the predicted records that accurately match each field (key) and value of the ground truth. We can then measure precision and recall for the retrieval task and calculate the mean average precision, recall, and F1 scores for all documents after matching the ground-truth records with the predicted records. We evaluated popular long-context closed- and open-weight models on CURIE and found that there is substantial room for improvement across all models and tasks, in particular on DFT, MPV and GEO tasks that require exhaustive retrieval of multiple values and aggregation. Expe rts provide a comprehensive analysis of the findings in the paper. Of note is that experts found model responses promising, particularly in extracting details from a scientific paper, grouping them appropriately, and generating responses in a desired format.

Overall, scientists’ workflows will be enhanced and accelerated if LLMs perform better on such tasks. SPIQA:

A dataset for multimodal question answering on scientific papers

While CURIE evaluates scientific reasoning over long texts in different domains, comprehending multimodal content in scientific articles presents additional challenges. Figures and tables that have been meticulously crafted are frequently used to present key insights, motivation, and a simplified overview of the scientific methods. Thus, to independently evaluate the ability of LLMs to simultaneously reason over multiple figures and associated text in a scientific article, we introduce the Scientific Paper Image Question Answering (SPIQA) dataset and benchmark. We test LLMs’ long-context and multimodal capabilities with SPIQA.

Techosta Where Tech Starts From

Techosta Where Tech Starts From